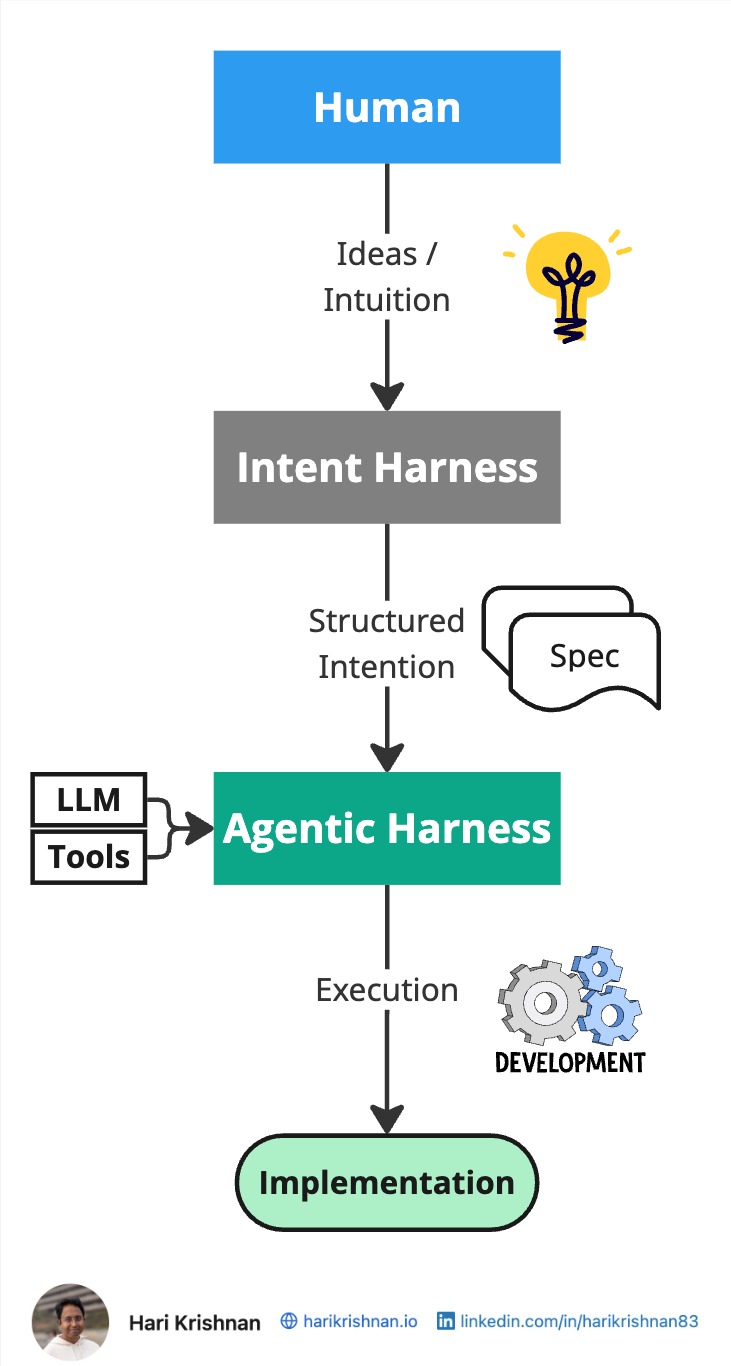

An agentic harness is the execution layer that channels LLM capability into useful work. It does this by combining the model’s reasoning with tools, workflow control, memory, and guardrails. For sustained autonomous execution, it relies on structured context that makes intent, constraints, and success conditions explicit. Without that structure, the agent may drift from the intended outcome, and constant supervision becomes necessary.

To sustain autonomous execution without losing alignment between intent and implementation, we need to close the gap in shared understanding between humans and agents. The Intent Harness closes that gap by helping transform ideas into well-articulated intent that an agent can execute with less supervision.

Intent Harness

Most useful ideas do not begin as fully formed specifications. They begin as intuition.

A team senses a feature that should exist. A product manager sees a pattern in customer behavior. An engineer notices an architectural weakness. A founder feels that a workflow should be simpler. Sometimes that intuition comes from expertise. Sometimes it is supported by data. Either way, it starts out incomplete.

That incompleteness is not a flaw. It is useful.

Intuition is valuable precisely because it leaves room to explore options, test assumptions, and discover better paths before committing to implementation. The problem is not that ideas begin vaguely. The problem is what happens when we send that vagueness straight into an execution system.

Without a disciplined process for clarification, the result is shallow prompting, weak task framing, and low-quality execution. The agent may still produce output, but that output depends on constant human supervision to stay on track.

The Intent Harness exists to solve this. It sits a layer above the agentic harness, and its job is to help humans articulate intent clearly before execution begins. It does this by enabling dialogue between humans and AI to establish shared understanding. Just as the agentic harness channels raw LLM capability into useful work, the Intent Harness channels raw intuition and ideas into well-engineered intent.

Spec-Driven Development plays a major role here by giving that intent a structured, reviewable form. It helps us harness intent without forcing us to lock down every detail prematurely.

In practice, harnessing intent means creating a system that helps us:

- examine an idea from multiple angles

- challenge assumptions

- make constraints explicit

- define success criteria

- decide what should and should not be built

- break work into meaningful units before execution starts
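One way to make the checklist above concrete is to treat articulated intent as a small data structure with a readiness check. The following is only a minimal sketch: `IntentSpec`, its field names, and `readiness_gaps` are hypothetical names chosen for illustration, not part of any Spec-Driven Development tool, and the "at least two angles" threshold is an arbitrary assumption.

```python
from dataclasses import dataclass, field


@dataclass
class IntentSpec:
    """Hypothetical container for articulated intent (illustrative field names)."""
    idea: str
    perspectives: list[str] = field(default_factory=list)      # angles the idea was examined from
    open_assumptions: list[str] = field(default_factory=list)  # assumptions not yet challenged
    constraints: list[str] = field(default_factory=list)       # explicit constraints
    success_criteria: list[str] = field(default_factory=list)  # how we know it worked
    in_scope: list[str] = field(default_factory=list)          # what should be built
    out_of_scope: list[str] = field(default_factory=list)      # what should not be built
    work_units: list[str] = field(default_factory=list)        # units of work for execution


def readiness_gaps(spec: IntentSpec) -> list[str]:
    """Return the reasons this spec is not yet ready to hand to an agent."""
    gaps = []
    if len(spec.perspectives) < 2:  # assumed threshold, purely illustrative
        gaps.append("idea examined from fewer than two angles")
    if spec.open_assumptions:
        gaps.append(f"{len(spec.open_assumptions)} assumption(s) still unchallenged")
    if not spec.constraints:
        gaps.append("no explicit constraints")
    if not spec.success_criteria:
        gaps.append("no success criteria")
    if not spec.in_scope or not spec.out_of_scope:
        gaps.append("scope boundary (build / do not build) not decided")
    if not spec.work_units:
        gaps.append("work not broken into units")
    return gaps


if __name__ == "__main__":
    draft = IntentSpec(idea="Simplify the onboarding workflow")
    for gap in readiness_gaps(draft):
        print("-", gap)
```

A check like this does not replace the human–AI dialogue that produces the spec. It only makes visible which parts of that dialogue have not happened yet.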

Harness Engineering

If the questions we ask, the review discipline we apply, and the framework we use to think all shape the resulting intent, then the quality of that system is critical. How well we engineer the harness determines how well we articulate intent and how much reliable autonomy we can expect from our agents.

Weak intent engineering produces weak execution:

- specs become shallow

- task boundaries become fuzzy

- review becomes ceremonial

- agents need constant correction

Strong intent engineering produces better outcomes:

- clearer specifications

- better task decomposition

- earlier surfacing of ambiguities

- longer, more focused independent agent execution

This is why the conversation around AI-assisted delivery should not stop at model capability or coding-agent ergonomics. The real leverage increasingly comes from the quality of the harness that shapes intent before execution starts.

This angle also changes how we interpret bugs, mismatches, and execution failures.

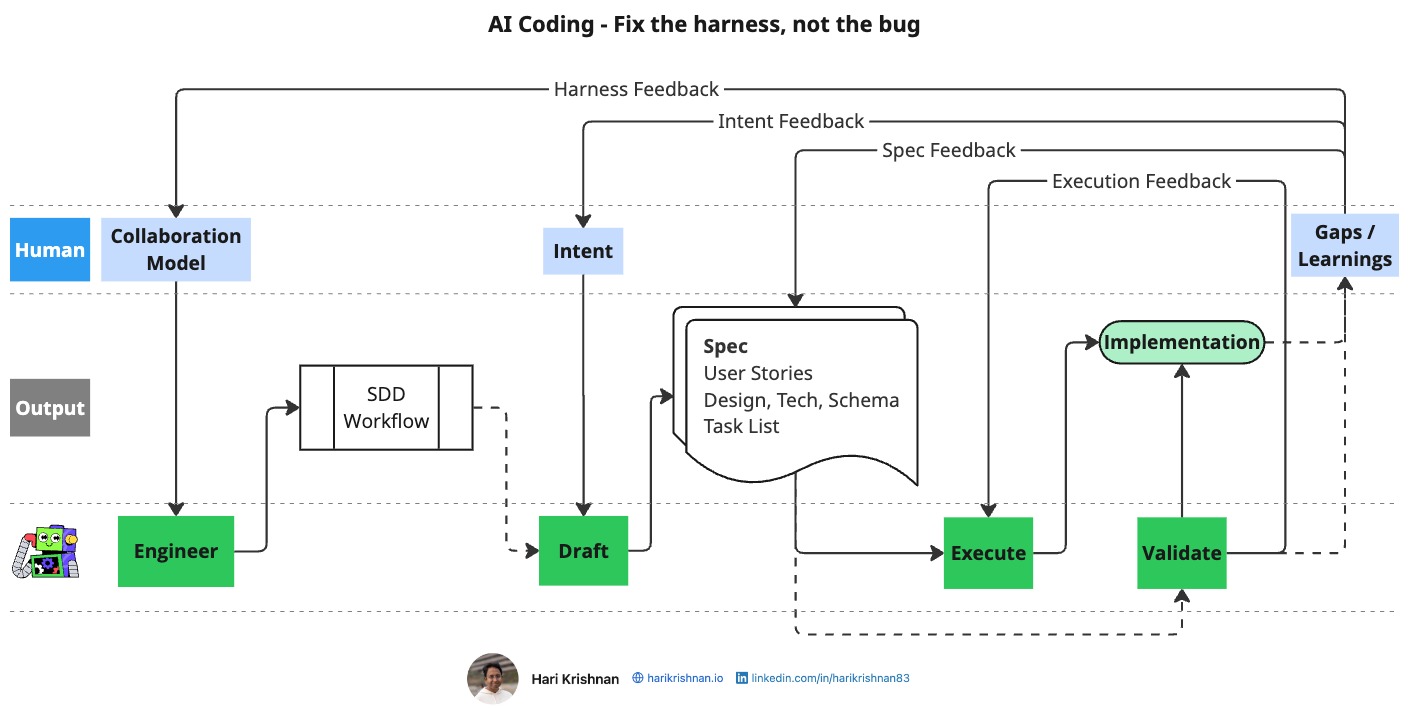

Image from my InfoQ article about Spec-Driven Development Adoption at Enterprise Scale.

A defect in implementation is not always just an implementation problem. Sometimes it is a signal that the harness itself needs improvement.

For example:

- a bug in code may point to a gap in the spec

- a gap in the spec may point to insufficient human review

- insufficient review may point to a pattern of high-volume, low-quality spec generation

- the structure and quality of those specs may ultimately indicate a weak workflow
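The chain above can be sketched as a simple upstream walk: starting from the layer where a defect surfaced, list each layer that should be inspected in turn. This is a rough illustration only. The layer names and the `trace_upstream` helper are assumptions made for this example, and a real harness would attach evidence and judgment to each step rather than follow a fixed mapping.

```python
# Each layer maps to the upstream layer worth inspecting next.
# The ordering mirrors the chain in the list above; the names are illustrative.
UPSTREAM = {
    "code": "spec",
    "spec": "review",
    "review": "spec_generation",
    "spec_generation": "workflow",
}

KNOWN_LAYERS = set(UPSTREAM) | set(UPSTREAM.values())


def trace_upstream(start: str) -> list[str]:
    """Return the layers to inspect, from where the defect surfaced up to the workflow."""
    if start not in KNOWN_LAYERS:
        raise ValueError(f"unknown layer: {start!r}")
    path = [start]
    # Keep following the mapping until we reach a layer with nothing upstream.
    while path[-1] in UPSTREAM:
        path.append(UPSTREAM[path[-1]])
    return path


if __name__ == "__main__":
    print(" -> ".join(trace_upstream("code")))
```

The walk does not claim that every bug originates in the workflow. It only lists the upstream places where the cause may sit, so that a fix in code is not automatically treated as the end of the investigation.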

Seen this way, execution failures are not only quality issues in the output. They are also feedback on the quality of the system that produced the output.

That is an important shift.

It means we should not ask only, “Why did the agent get this wrong?”

We should also ask, “What in our elicitation, specification, review, or validation process allowed this ambiguity to survive?”

This is where the harness metaphor becomes genuinely useful. The purpose of the harness is not just to make the agent productive. It is to make the overall human–AI system learnable and improvable.

Closing thoughts

A good Intent Harness should do more than help teams generate artifacts. Its primary responsibility is to enable human–AI collaboration that elicits intent thoroughly, encourages meaningful review, and ultimately keeps implementation aligned with intent.

This post is a living document. I intend to continue updating it.